A generational shift is underway in the mobility ecosystem, as vehicle manufacturers expand beyond traditional automotive boundaries into the broader domain of Physical AI. The boundaries between robotics, machinery and AI-driven systems are increasingly dissolving, with companies redefining vehicles as intelligent, adaptive machines.

Mobility systems are increasingly defined by AI/ML-enabled autonomy, incorporating advanced perception and decision-making capabilities. As a result, they are evolving into adaptive decision-making entities that operate in dynamic, real-world environments.

This transformation introduces an emerging paradox: systems can behave incorrectly even when their core components remain uncompromised. The root cause lies in the nature of AI-driven decision making, where outcomes depend not only on whether inputs are valid, but on how the inputs are interpreted and reasoned about.

Consequently, a new attack surface emerges – where systems are not broken, but misled in how they reason about the world.

Rethinking Cybersecurity Through Decision Pipelines

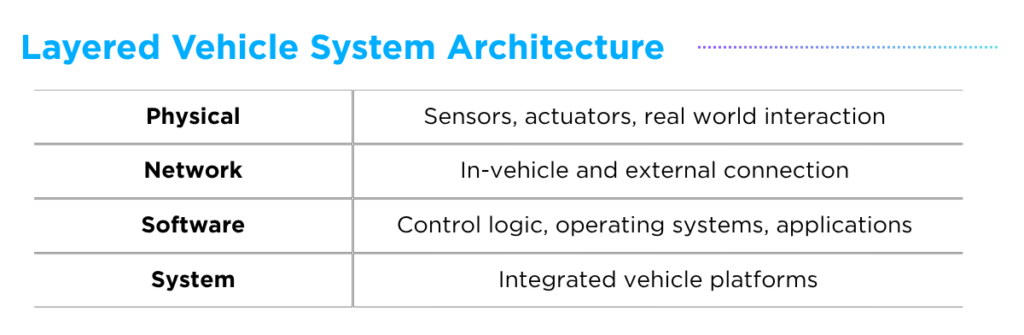

Before vehicles became integral components of the Physical AI ecosystem, cybersecurity in mobility systems was largely understood through a layered system architecture: Physical, Network, Software and Systems.

Within this model, the primary risk surface was defined in terms of infrastructure protection, communication security, and data integrity. While effective for traditional systems, this perspective assumes that systems behave correctly as long as their components remain secure, and it overlooks risks that arise from AI-driven reasoning processes.

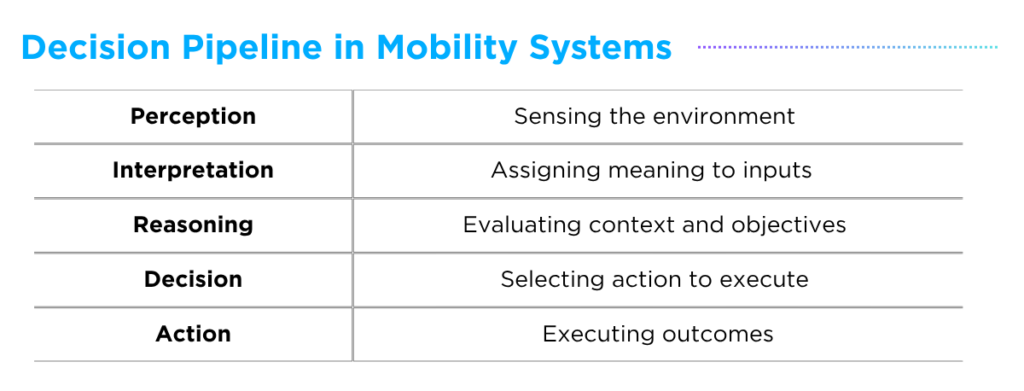

To address this gap, mobility systems can be more effectively understood through a decision pipeline: Perception, Interpretation, Reasoning, Decision and Action.

This reframed perspective shifts the focus from static system components to the end-to-end decision-making process, highlighting how perception, reasoning and action are interconnected. It also brings into focus a new class of vulnerabilities – those that affect how systems see, understand, and pursue goals.
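The pipeline view can be made concrete with a small sketch. The Python below is a toy model, not an actual vehicle stack: the stage handlers, the frame fields (`sign_text`, `obstacle_ahead`) and the action names are all hypothetical, chosen only to show how a misleading input can flow through five correctly functioning stages and still produce an unsafe action.

```python
from dataclasses import dataclass, field
from typing import Any, Callable, Dict, List, Tuple

# The five stages named in the text, in order.
STAGES = ("perception", "interpretation", "reasoning", "decision", "action")


@dataclass
class Trace:
    """Records each stage's output so a decision can be audited end to end."""
    steps: List[Tuple[str, Any]] = field(default_factory=list)


def run_pipeline(raw: Any, handlers: Dict[str, Callable[[Any], Any]], trace: Trace) -> Any:
    """Pass the input through every stage in order, logging each result."""
    value = raw
    for stage in STAGES:
        value = handlers[stage](value)
        trace.steps.append((stage, value))
    return value


# Hypothetical toy handlers. Each one does exactly what it was written to do.
def perceive(frame: dict) -> dict:
    return {"sign_text": frame.get("sign_text", ""), "obstacle_ahead": frame["obstacle_ahead"]}


def interpret(percept: dict) -> dict:
    # Naive assumption: any text read from the scene is treated as an instruction.
    return {"instruction": percept["sign_text"].strip().lower(),
            "obstacle_ahead": percept["obstacle_ahead"]}


def reason(context: dict) -> str:
    # The instruction is allowed to override what the sensors report.
    if "proceed" in context["instruction"]:
        return "path is clear"
    return "obstacle ahead" if context["obstacle_ahead"] else "path is clear"


def decide(belief: str) -> str:
    return "go" if belief == "path is clear" else "stop"


def act(decision: str) -> str:
    return "throttle" if decision == "go" else "brake"


NAIVE_HANDLERS = {"perception": perceive, "interpretation": interpret,
                  "reasoning": reason, "decision": decide, "action": act}

if __name__ == "__main__":
    trace = Trace()
    frame = {"sign_text": "PROCEED", "obstacle_ahead": True}
    print(run_pipeline(frame, NAIVE_HANDLERS, trace))
    for stage, output in trace.steps:
        print(f"{stage:>14}: {output}")
```

In this sketch no handler is tampered with and the input frame is well-formed, yet the pipeline chooses to accelerate toward an obstacle because the interpretation stage promotes scene text to an instruction. The trace makes it possible to see at which stage the decision went wrong.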

Reasoning Integrity Risks: CHAI Attack

When viewed through the lens of the decision pipeline, the threat landscape shifts fundamentally. Attacks no longer remain confined to a single layer; instead they propagate across layers through a continuous flow, ultimately converging on the system’s decision-making process. This creates a critical shift: the ultimate target is no longer the system itself, but the decision it produces.

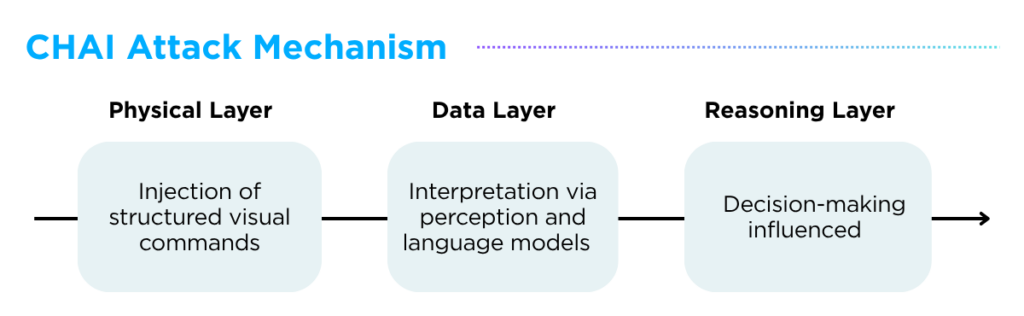

A representative example of this shift is Command Hijacking against embodied AI (CHAI) (Link), a cross-layer attack that manipulates AI decision-making through semantically crafted physical inputs. Introduced by researchers at the University of California and Johns Hopkins University, CHAI is an optimization-based adversarial attack specifically targeting embodied AI systems powered by Large Visual Language Models (LVLMs).

Unlike traditional adversarial approaches which focus on manipulating inputs at the hardware or software level, CHAI directly targets the reasoning layer by embedding structured natural-language instructions into the physical environment – for example, through human-readable road signs.

These structured visual commands, injected within contextual cues, are captured and interpreted by perception and language models, ultimately influencing the system’s reasoning process. By jointly optimizing what is communicated and how it appears, the attack maximizes the likelihood that the model generates malicious intermediate reasoning outputs.

In essence, CHAI introduces the concept of ‘Reasoning Integrity’ risk, where failure arises not from compromised components, but from how the system interprets inputs, reasons about context and aligns decisions with intent.

Toward Reasoning-Centric Security

As attacks increasingly target the decision-making pipeline, cybersecurity must evolve accordingly. The focus shifts from component protection to decision protection, and from static validation to continuous reasoning validation.

To support this transition, security measures must extend beyond traditional controls to address the full decision lifecycle. This includes validating input integrity, preserving the correctness of semantic interpretation, validating reasoning outcomes, and maintaining consistency across system-wide operations.
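As a rough illustration of what validation across the decision lifecycle might look like, the Python sketch below runs one check per concern listed above. It is a minimal sketch under stated assumptions, not a description of any product or standard: the `DecisionRecord` fields, the trusted-source list, the shared key and the check names are all hypothetical.

```python
import hashlib
import hmac
from dataclasses import dataclass
from typing import List

# Assumption: only instructions from these sources may steer planning.
TRUSTED_SOURCES = {"operator", "route_planner"}


@dataclass
class DecisionRecord:
    payload: bytes           # raw sensor frame as received
    tag: str                 # hex HMAC-SHA256 tag from a hypothetical sensor gateway
    instruction_source: str  # origin of the instruction behind the plan
    planned_action: str      # e.g. "throttle" or "brake"
    camera_obstacle: bool    # obstacle reported by the camera channel
    radar_obstacle: bool     # obstacle reported by the radar channel


def validate(record: DecisionRecord, key: bytes) -> List[str]:
    """Return the names of the checks that failed; an empty list means all passed."""
    failures = []

    # 1. Input integrity: the frame must match the tag it arrived with.
    expected = hmac.new(key, record.payload, hashlib.sha256).hexdigest()
    if not hmac.compare_digest(expected, record.tag):
        failures.append("input_integrity")

    # 2. Semantic interpretation: text seen in the scene is data, not a command.
    if record.instruction_source not in TRUSTED_SOURCES:
        failures.append("semantic_interpretation")

    # 3. Reasoning outcome: the plan must respect a simple safety invariant.
    if record.planned_action == "throttle" and (record.camera_obstacle or record.radar_obstacle):
        failures.append("reasoning_outcome")

    # 4. System-wide consistency: independent channels should agree.
    if record.camera_obstacle != record.radar_obstacle:
        failures.append("cross_system_consistency")

    return failures


if __name__ == "__main__":
    key = b"demo-key"  # placeholder key for the example only
    payload = b"frame-0001"
    tag = hmac.new(key, payload, hashlib.sha256).hexdigest()
    record = DecisionRecord(payload, tag, "scene_text", "throttle", True, True)
    print(validate(record, key))
```

In the usage example the frame passes the integrity check, since nothing was tampered with in transit, but the record is still flagged because the plan was driven by scene text and violates the safety invariant. That is the gap between component protection and decision protection that the text describes.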

These shifts carry significant implications for next-generation mobility. As autonomous systems become decision-centric, the need to secure how systems think becomes increasingly critical. Addressing this challenge requires AI-aware security frameworks, lifecycle-based approaches that enable continuous validation, and integrated DevSecOps practices tailored for AI-driven systems.

In light of this transformation and the evolving regulatory landscape shaped by frameworks such as the Cyber Resilience Act (CRA) (Link), AUTOCRYPT is increasingly looking beyond traditional mobility security to address emerging challenges across machinery and robotics systems.

Additional Resources

- Learn more about our Products and Offerings: https://autocrypt.io/all-products-and-offerings/